To understand how and why SSDs are different from spinning drives, we need to talk a bit about hard drives.

A hard drive stores data on a series of spinning magnetic disks called platters. There is an actuator arm to which read/write heads are attached. This arm positions the read/write heads above the correct area of the disk to read or write information.

Because the drive heads must be aligned with an area of the drive in order to read or write data (and the drive constantly spins), there is a non-zero wait time before data can be accessed.

The drive may need to read from more than one location in order to start a program or load a file, which means it may have to wait for the platters to rotate into the correct position several times before it can complete the command.

If a drive is in a standby or low-power state, it can take several seconds more for the platters to spin up to full speed before the drive starts working.

From the start, it was clear that hard drives could not match the speeds at which processors operate. Hard drive latency is measured in milliseconds, compared to nanoseconds for a typical processor.

One millisecond is equivalent to 1,000,000 nanoseconds, and it typically takes 10 to 15 milliseconds for a hard drive to find data on the drive and start reading it.

The hard drive industry has introduced smaller platters, on-disk memory caches, and higher spin speeds to counter this trend, but there is a limit to how fast a drive can spin.

Western Digital’s VelociRaptor family, which spins at 10,000 rpm, is the fastest set of drives ever built for the consumer market, while some enterprise drives spin at up to 15,000 rpm. The problem is that even the fastest-spinning drive is still very slow from the processor’s point of view.

How do Hard Drives Work?

A hard drive is a storage device that holds data in a computer. It is usually made up of one or more spinning disks, called platters, that store data in magnetic form. The drives are connected to the computer through either an IDE or SATA interface.

When you want to access data on a hard drive, the computer sends a request to the drive telling it which data to read. The actuator arm moves the read/write head to the correct track, and the drive then waits for the spinning platter to bring the correct sector under the head. The head reads or writes the data as required.

The speed at which a hard drive spins its platters and moves its heads determines how quickly it can reach and transfer data. Most consumer hard drives spin at 5,400 or 7,200 rpm and have an average seek time of around 12 milliseconds. They can typically transfer data at around 100MB/s.
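
To make the relationship between spin speed and access time concrete, here is a minimal sketch. It assumes the common rule of thumb that the average rotational wait is half of one rotation, and it reuses the 12 ms seek figure from the text for every spin speed, which is a simplification. The function names are made up for this illustration.

```python
def average_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the target sector: half of one full rotation."""
    ms_per_rotation = 60_000 / rpm  # 60,000 ms in a minute
    return ms_per_rotation / 2


def average_access_time_ms(rpm: float, seek_ms: float) -> float:
    """Seek time plus the average rotational wait."""
    return seek_ms + average_rotational_latency_ms(rpm)


if __name__ == "__main__":
    # Assumption: the same 12 ms seek time for every drive, for comparison only.
    for rpm in (5_400, 7_200, 10_000, 15_000):
        wait = average_rotational_latency_ms(rpm)
        total = average_access_time_ms(rpm, seek_ms=12.0)
        print(f"{rpm:>6} rpm: rotational wait {wait:.2f} ms, access {total:.2f} ms")
```

Even at 15,000 rpm the rotational wait alone is 2 ms, which is why faster spindles only narrow the gap with the processor rather than close it.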

How are SSDs different?

Solid-state drives are so called because they don’t rely on moving parts or rotating disks. Instead, data is stored in a pool of NAND flash.

NAND itself is made up of so-called floating gate transistors. Unlike transistors used in DRAMs, which must be refreshed several times per second, NAND flash is designed to maintain its state of charge even when not powered. This makes NAND a type of non-volatile memory.

The diagram above shows a simple flash cell design. The electrons are stored in the floating gate, which then reads as charged “0” or uncharged “1”.

In NAND flash, a 0 means data is stored in a cell, which is the opposite of how we usually think of a zero or one. The NAND flash is organized in a grid. The entire grid is called a block, while the individual rows that make up the grid are called pages.

Common page sizes are 2K, 4K, 8K, or 16K, with 128 to 256 pages per block. The size of the blocks therefore generally varies between 256KB and 4MB.
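
The block-size range follows directly from multiplying page size by pages per block. The short sketch below checks the two extremes quoted above; the helper name is hypothetical.

```python
KIB = 1024  # bytes per kibibyte


def block_size_bytes(page_size_kib: int, pages_per_block: int) -> int:
    """Size of one NAND block: page size times the number of pages in it."""
    return page_size_kib * KIB * pages_per_block


smallest = block_size_bytes(page_size_kib=2, pages_per_block=128)
largest = block_size_bytes(page_size_kib=16, pages_per_block=256)

if __name__ == "__main__":
    print(smallest // KIB, "KB")          # smallest block in the quoted range
    print(largest // (KIB * KIB), "MB")   # largest block in the quoted range
```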

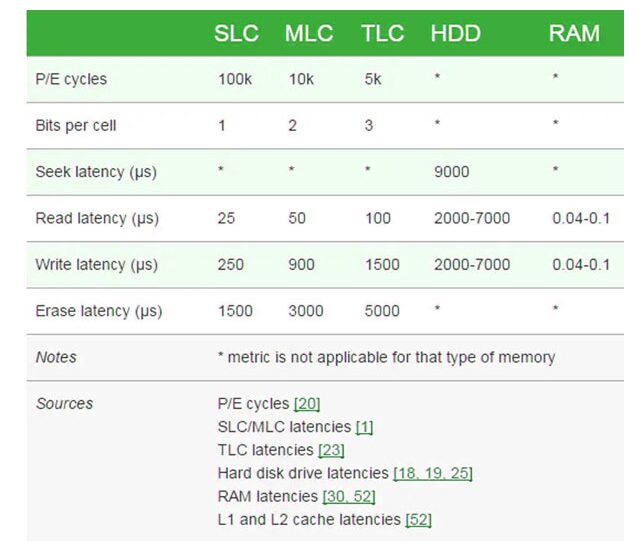

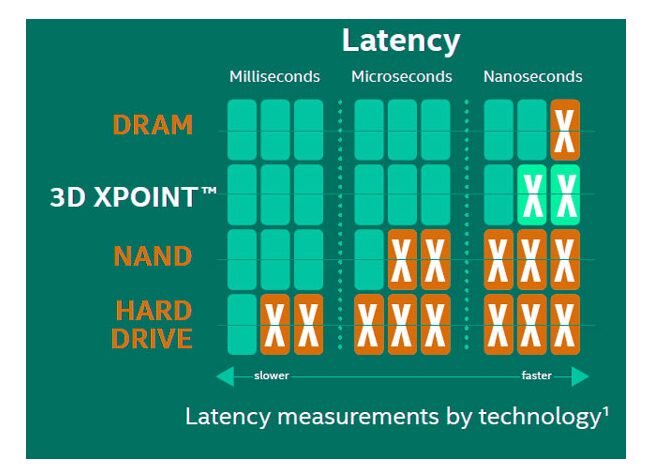

One of the advantages of this system should be immediately obvious. Since SSDs have no moving parts, they can operate at speeds much faster than a typical hard drive. The following graph shows typical storage media access latency, expressed in microseconds.

NAND is nowhere near as fast as main memory, but it is several orders of magnitude faster than a hard drive. Although write latencies are significantly higher than read latencies for NAND flash, they are still far better than those of traditional spinning media.

There are two things to note in the table above. First, notice how adding more bits per cell of NAND has a significant impact on memory performance.

This is worse for writing than reading: the typical latency of triple-level cell (TLC) NAND is 4 times worse than that of single-level cell (SLC) NAND for reading, but 6 times worse for writing. Erase latencies are also strongly affected.

The impact is also not proportional: TLC NAND is almost twice as slow as MLC NAND, although it only holds 50% more data (three bits per cell instead of two). This is also true for QLC drives, which store even more bits at varying voltage levels in the same cell.

The reason TLC NAND is slower than MLC or SLC is related to the way data enters and leaves the NAND cell. With SLC NAND, the controller only needs to know if the bit is a 0 or 1. With MLC NAND, the cell can have four values - 00, 01, 10 or 11.

With TLC NAND, the cell can have eight values, and with QLC it can have 16. To read the correct value from the cell, the memory controller must apply a precise voltage to determine the cell’s charge state.
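
The number of states a cell must distinguish doubles with every extra bit. The following sketch enumerates them; the dictionary and function names are illustrative only.

```python
# Bits stored per cell for each NAND type mentioned in the text.
BITS_PER_CELL = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4}


def voltage_levels(bits_per_cell: int) -> int:
    """Distinct charge states the controller has to tell apart."""
    return 2 ** bits_per_cell


def cell_values(bits_per_cell: int) -> list:
    """All bit patterns a single cell can hold, as zero-padded binary strings."""
    return [format(v, f"0{bits_per_cell}b") for v in range(voltage_levels(bits_per_cell))]


if __name__ == "__main__":
    for name, bits in BITS_PER_CELL.items():
        print(f"{name}: {voltage_levels(bits)} levels")
```

Because the same voltage window has to be divided into more levels, each step leaves less margin for error, which is consistent with the slower reads and writes described above.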

Read, write and erase

One of the functional limitations of SSDs is that while they can read and write data very quickly to an empty drive, overwriting data is much slower.

This is because while SSDs read data at the page level (i.e. from individual rows in the NAND memory grid) and can write at the page level, assuming the surrounding cells are empty, they can only erase data at the block level.

This is because erasing NAND flash requires a high voltage. Although you could theoretically erase NAND at the page level, the amount of voltage required stresses the individual cells around the cells being rewritten. Erasing data at the block level helps mitigate this problem.

The only way for an SSD to update an existing page is to copy the contents of the entire block into memory, erase the block, and then write back the contents of the old block plus the updated page.

If the drive is full and no empty pages are available, the SSD must first find blocks that are marked for deletion but have not yet been erased, erase them, and then write the data to the newly erased pages.

This is why SSDs can become slower as they age: a nearly empty drive is full of blocks that can be written immediately, while a nearly full drive is more likely to be forced through the entire program/erase sequence.
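
The copy, erase, and rewrite sequence can be sketched with a toy model. This is not how any real controller is implemented: the `Block` class, its methods, and the use of `None` for an erased page are all assumptions made for illustration.

```python
ERASED = None  # assumption: None stands for a page that has been erased


class Block:
    """Toy NAND block: pages are programmed individually, erased only together."""

    def __init__(self, pages_per_block: int):
        self.pages = [ERASED] * pages_per_block
        self.erase_count = 0

    def program(self, index: int, data: str) -> None:
        # A page can only be programmed while it is in the erased state.
        if self.pages[index] is not ERASED:
            raise ValueError("page must be erased before it can be programmed")
        self.pages[index] = data

    def erase(self) -> None:
        # Erasing always clears every page in the block.
        self.pages = [ERASED] * len(self.pages)
        self.erase_count += 1


def update_page_in_place(block: Block, index: int, new_data: str) -> int:
    """Read-modify-write: copy the block out, erase it, write everything back.

    Returns the number of pages that had to be programmed.
    """
    snapshot = list(block.pages)  # copy the whole block into memory
    snapshot[index] = new_data    # apply the one-page update to the copy
    block.erase()
    written = 0
    for i, data in enumerate(snapshot):
        if data is not ERASED:
            block.program(i, data)
            written += 1
    return written
```

In this model, changing a single page in a block that holds three pages of data costs one erase and three page writes, which is the overhead the text describes.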

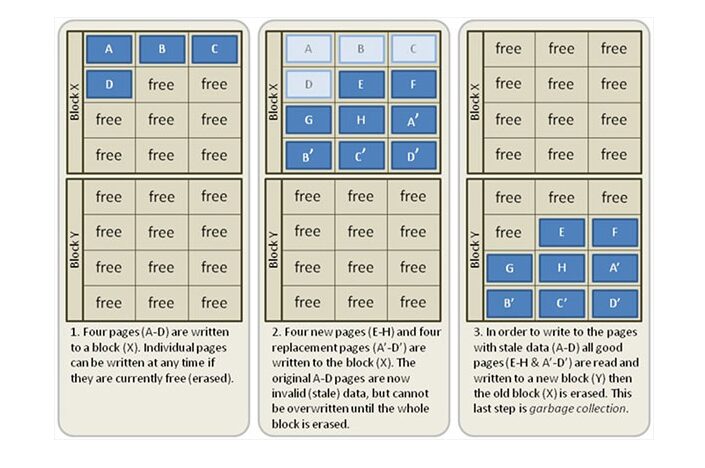

If you’ve used solid-state drives, you’ve probably heard of something called “garbage collection”.

Garbage collection is a background process that lets a drive mitigate the performance impact of the program/erase cycle by performing certain tasks in the background. The following image illustrates the steps in the garbage collection process.

Note in this example that the drive has taken advantage of the fact that it can write to empty pages very quickly by writing new values for the first four blocks (A’-D’). It has also written two new blocks, E and H.

Blocks A through D are now marked as stale, meaning they contain information that the drive has marked as out of date. During a period of inactivity, the SSD will move the fresh pages to a new block, erase the old block, and mark it as free space.

This means that the next time the SSD needs to do a write, it can write directly to the now empty X block, rather than going through the program / erase cycle.
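
A rough sketch of that idle-time cleanup follows. The data layout (a page as a `(data, is_stale)` tuple, with `None` for an erased page) and the function name are assumptions for this example, and the sample pages only loosely mirror the labels used above.

```python
from typing import List, Optional, Tuple

# Assumption: a page is (data, is_stale); None means the page is erased and free.
Page = Optional[Tuple[str, bool]]


def garbage_collect(victim: List[Page], spare: List[Page]) -> int:
    """Copy fresh pages from `victim` into free slots of `spare`, then erase `victim`.

    Returns the number of pages that were copied.
    """
    fresh = [page for page in victim if page is not None and not page[1]]
    free_slots = [i for i, page in enumerate(spare) if page is None]
    if len(fresh) > len(free_slots):
        raise ValueError("spare block does not have enough free pages")
    for slot, page in zip(free_slots, fresh):
        spare[slot] = page
    victim[:] = [None] * len(victim)  # whole-block erase frees every page
    return len(fresh)


if __name__ == "__main__":
    block_x: List[Page] = [
        ("A", True), ("B", True), ("C", True), ("D", True),      # stale originals
        ("A'", False), ("B'", False), ("C'", False), ("D'", False),
        ("E", False), ("H", False),
        None, None,
    ]
    block_y: List[Page] = [None] * 12
    copied = garbage_collect(block_x, block_y)
    print(copied, "fresh pages moved; block X is now empty")
```

After the call, block X contains only erased pages, so the next write can go straight to it.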

The next concept I want to talk about is TRIM. When you delete a file in Windows on a typical hard drive, the file is not deleted immediately.

Instead, the operating system tells the hard drive that it can overwrite the physical area of the drive where that data was stored the next time it needs to write. That’s why it’s possible to undelete files (and why deleting files in Windows usually doesn’t free up a lot of physical disk space before you empty the Recycle Bin).

With a traditional hard drive, the operating system does not need to pay attention to where data is written or the relative state of blocks or pages. With an SSD, this is important.

The TRIM command allows the operating system to tell the SSD that it can skip rewriting some data the next time it performs a block erase. This reduces the total amount of data the drive writes and increases the longevity of the SSD.
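
One way to picture the effect of TRIM is to count how many pages the drive has to preserve when it erases a block. In this simplified sketch, page identifiers are plain integers and `pages_to_copy` is a hypothetical helper, not part of any real drive interface.

```python
from typing import Iterable, List


def pages_to_copy(pages_with_data: Iterable[int], trimmed: Iterable[int]) -> List[int]:
    """Pages the drive must rewrite elsewhere before erasing a block.

    `trimmed` holds the pages the operating system has reported as deleted,
    so the drive is free to drop them instead of copying them.
    """
    return sorted(set(pages_with_data) - set(trimmed))


if __name__ == "__main__":
    block = [1, 2, 3, 4]
    print(pages_to_copy(block, trimmed=[]))      # no TRIM: every page is preserved
    print(pages_to_copy(block, trimmed=[2, 4]))  # TRIM: deleted pages are skipped
```

Without the hint from the operating system, the drive has no way to know that a page belongs to a deleted file, so it keeps copying that data forward.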

Both reading and writing wear out NAND flash, but writing does much more damage than reading. Fortunately, block-level longevity has not proven to be an issue for modern NAND flash.

The last two concepts we want to talk about are wear leveling and write amplification. Because SSDs write data to pages but erase data in blocks, the amount of data written to the drive is always greater than the size of the actual update.

If you make a change to a 4KB file, for example, the entire block that the 4KB file sits in needs to be updated and rewritten. Depending on the number of pages per block and the size of the pages, you might end up writing 4MB of data to update a 4KB file. Garbage collection reduces the impact of write amplification, as does the TRIM command.
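
Write amplification is usually expressed as the ratio of data written to the NAND to data the host asked to write. A quick calculation for the worst case described above, using a hypothetical helper:

```python
KIB = 1024
MIB = 1024 * KIB


def write_amplification(host_bytes: int, nand_bytes: int) -> float:
    """Bytes physically written to flash per byte the host requested."""
    return nand_bytes / host_bytes


# Worst case from the text: a 4KB update forces a full 4MB block rewrite.
worst_case = write_amplification(host_bytes=4 * KIB, nand_bytes=4 * MIB)

if __name__ == "__main__":
    print(f"write amplification: {worst_case:.0f}x")
```

A factor of 1.0 would mean the drive writes exactly what the host requested; the closer a drive stays to that, the less wear each update causes.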

Wear leveling refers to the practice of ensuring that certain NAND blocks are not written and erased more often than others. While wear leveling increases a drive’s life expectancy and endurance by writing to the NAND evenly, it can actually increase write amplification.

In order to distribute writes evenly across the drive, it is sometimes necessary to program and erase blocks even if their contents have not actually changed. A good wear-leveling algorithm seeks to balance these impacts.
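
Real wear-leveling algorithms are proprietary, so the following is only a minimal sketch of the basic idea: always send the next write to the block that has been erased the fewest times. The function names and the tie-breaking rule (lowest block number wins) are assumptions.

```python
from typing import Dict, Iterable


def pick_block(erase_counts: Dict[int, int], candidates: Iterable[int]) -> int:
    """Choose the least-worn candidate block; ties go to the lowest block number."""
    return min(candidates, key=lambda block: (erase_counts[block], block))


def simulate(num_blocks: int, writes: int) -> Dict[int, int]:
    """Issue `writes` block rewrites and return the erase count of each block."""
    counts = {block: 0 for block in range(num_blocks)}
    for _ in range(writes):
        target = pick_block(counts, counts.keys())
        counts[target] += 1  # each rewrite costs the chosen block one erase
    return counts


if __name__ == "__main__":
    result = simulate(num_blocks=4, writes=10)
    print(result)
    print("spread:", max(result.values()) - min(result.values()))
```

With this policy, no block is ever more than one erase ahead of any other. A real drive also has to move rarely changed data off lightly worn blocks, which is where the extra write amplification mentioned above comes from.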

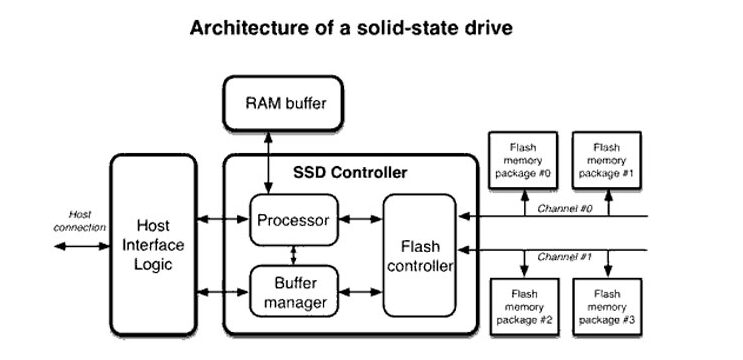

SSD controller

It should be obvious that SSDs require much more sophisticated control mechanisms than hard drives. This is not meant to disparage magnetic media; I actually think hard drives deserve more respect than they get.

The mechanical challenges of balancing multiple read/write heads nanometers above platters that spin at 5,400 to 10,000 rpm are not to be overlooked.

The fact that hard drives meet this challenge while pioneering new methods of recording on magnetic media and ending up selling drives at 3-5 cents per gigabyte is nothing short of amazing.

SSD controllers, however, are in a class of their own. They often have a DDR3 or DDR4 memory pool to help manage the NAND itself. Many drives also incorporate single-level cell (SLC) caches that act as buffers, increasing drive performance by dedicating fast NAND to read/write cycles.

Since the NAND flash in an SSD is typically connected to the controller through a series of parallel memory channels, you can think of the drive controller as doing some of the same load-balancing work as a high-end storage array. SSDs don’t deploy RAID internally, but wear leveling, garbage collection, and SLC cache management all have parallels in the big iron world.

Some drives also use data compression algorithms to reduce the total number of writes and improve the life of the drive. The SSD controller handles error correction, and the algorithms that check for single-bit errors have become more and more complex over time.

Unfortunately, we cannot go into detail on SSD controllers because companies keep their various secret sauces locked down. Much of NAND flash’s performance is determined by the underlying controller, and companies are not willing to lift the veil on how they do what they do for fear of giving a competitor an edge.

Interfaces

In the beginning, SSDs used SATA ports, just like hard drives. In recent years, we’ve seen a shift towards M.2 drives: very thin drives, several inches long, that fit directly into the motherboard (or, in a few cases, into a mounting bracket on a PCIe riser card). A Samsung 970 EVO Plus drive is shown below.

NVMe drives provide superior performance over traditional SATA drives because they support a faster interface. Conventional SSDs connected via SATA top out at a practical read/write speed of about 550MB/s. NVMe M.2 drives are capable of significantly faster performance, in the 3.2GB/s range.
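
To put the two interface speeds in perspective, this small sketch estimates how long a sequential transfer would take at each rate. It assumes the 3.2 figure is in gigabytes per second, uses decimal units (1GB = 1,000MB), and ignores every other bottleneck; the 50GB size is an arbitrary example.

```python
def transfer_seconds(size_gb: float, throughput_mb_s: float) -> float:
    """Idealized time to move `size_gb` gigabytes at a sustained rate."""
    return size_gb * 1000 / throughput_mb_s


sata_seconds = transfer_seconds(50, 550)    # SATA SSD at about 550MB/s
nvme_seconds = transfer_seconds(50, 3200)   # NVMe SSD at about 3.2GB/s

if __name__ == "__main__":
    print(f"SATA: {sata_seconds:.1f} s")
    print(f"NVMe: {nvme_seconds:.1f} s")
```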

The road to follow

NAND flash offers a huge improvement over hard drives, but it is not without drawbacks and challenges. Drive capacities are expected to keep rising and price per gigabyte to keep falling, but SSDs are unlikely to catch up with hard drives in terms of price per gigabyte.

Shrinking process nodes is a big challenge for NAND flash. While most hardware gets better as the node shrinks, NAND becomes more fragile. Data retention times and write performance are inherently lower for 20nm NAND than for 40nm NAND, although data density and total capacity are significantly improved.

So far we have seen drives with up to 96 layers on the market, and 128 layers seems plausible at this point. Overall, the switch to 3D NAND has improved density without shrinking process nodes or relying on planar scaling.

So far, SSD manufacturers have achieved better performance by offering faster data standards, more bandwidth, and more channels per controller, plus the use of SLC caches we mentioned earlier. However, in the long run, it is assumed that NAND will be replaced by something else.

What form this other thing will take is still a matter of debate. Both magnetic RAM and phase change memory have come forward as candidates, although these two technologies are still in their infancy and face significant challenges in order to compete effectively with NAND.

Whether consumers would notice the difference is an open question. If you’ve gone from an HDD to an SSD and then to a faster SSD, you probably know that the gap between HDDs and SSDs is much larger than the gap between one SSD and another, even when you upgrade from a relatively modest drive. Improving access times from milliseconds to microseconds matters a great deal.

Intel’s 3D XPoint (marketed as Intel Optane) has established itself as a potential challenger to NAND flash, and it is the only alternative technology currently in production.

Optane SSDs don’t use NAND memory. They’re built using non-volatile memory that’s believed to be implemented similarly to phase-change RAM, and they deliver sequential performance similar to current NAND flash drives, but with significantly better performance at low queue depths.

The latency of the drives is also roughly half that of NAND flash (10 microseconds versus 20), and their endurance is significantly higher (30 full drive writes per day, versus 10 full drive writes per day for a high-end Intel SSD).

The first Optane SSDs debuted as excellent add-on modules for Kaby Lake and Coffee Lake systems. Optane is also available as stand-alone drives, and in various server roles for enterprise computing.

For now, Optane is still too expensive to match NAND flash, which benefits from significant economies of scale, but that could change in the future. NAND will remain king of the hill for at least the next 3-4 years.

But beyond that time frame, we might see Optane start to replace it in volume, depending on how Intel and Micron scale the technology and how well 3D NAND flash continues to add cell layers (96-layer NAND is shipping from multiple vendors, with roadmaps for 128 layers on the horizon).

What are SSD form factors?

Solid-state drives come in a variety of form factors, each with its own advantages and disadvantages. The two most common form factors are 2.5-inch and M.2. NVMe is often listed alongside them, but it is an interface protocol rather than a physical form factor.

2.5-inch SSDs are the largest of the common form factors and connect over SATA, which makes them compatible with most laptops and desktop computers. M.2 SSDs are smaller and thinner than 2.5-inch drives, making them ideal for ultra-thin laptops.

M.2 drives can use either SATA or NVMe. NVMe SSDs are the fastest type of SSD, but they tend to cost more and only work in computers with a compatible slot.

Upgrading to an SSD

Upgrading to an SSD can be a great way to improve your computer’s performance. Here are a few things to keep in mind when you’re considering upgrading to an SSD:

- Make sure your computer is compatible with an SSD. Check your computer’s documentation or manufacturer’s website to see if it supports SSDs.

- Choose the right SSD for your needs. There are different types of SSDs available, so make sure you choose one that’s compatible with your computer and meets your storage needs.

- Back up your data before upgrading. Since upgrading will involve replacing your existing hard drive, make sure you have a backup of all your important files before proceeding.

- Follow the instructions carefully. Upgrading to an SSD is not difficult, but it’s important to follow the instructions that come with your particular drive carefully. This will help ensure a smooth and successful upgrade process.

FAQs

1. Is SSD better for gaming than HDD?

There are a few key factors to consider when determining whether SSD or HDD is better for gaming. One is cost—an SSD will typically be more expensive than an HDD. Another is capacity—an SSD usually offers less storage space than an HDD. And finally, there’s speed—an SSD can offer much faster data access and transfer speeds than an HDD.

So, which is better for gaming? It really depends on your needs and budget. If you have the money to spare, an SSD will give you a significant performance boost. But if you’re on a tight budget, an HDD may be the better option.

2. How many SSDs can a PC have?

Some people believe that you can only have one SSD in a PC, but this is not the case. You can have multiple SSDs in a PC, and they can be used for different purposes.

One way to use multiple SSDs in a PC is to use one for the operating system and another for data storage. This can help improve performance, as the operating system will have quick access to the SSD while data storage will be on a separate drive.

Another way to use multiple SSDs is to set up a RAID array. This can provide redundancy and improve performance, as data will be spread across multiple drives.

You can also use an SSD as a cache for a traditional hard drive. This can improve performance by serving frequently accessed data from the fast SSD instead of the slower hard drive.

3. What SSD size is enough?

How much space do you currently use? What are your plans for the future? Are you someone who likes to keep everything just in case, or do you prefer to only keep what you need on hand?

If you’re not sure how much space you currently use, take a look at your hard drive utilization. If it’s close to full, then you’ll probably want at least 256GB of storage. If it’s less than half full, then 128GB should be plenty. Of course, these are just estimates – your mileage may vary.

As for the future, it’s always hard to say. If you think you might need more storage down the road, it might be worth getting a larger SSD now. That way, you won’t have to go through the hassle of upgrading later on.

Ultimately, it comes down to personal preference and needs. Some people are perfectly happy with 128GB of storage, while others find that they need more room to breathe and opt for 256GB or even 512GB drives. It all depends on how much stuff you have and how much stuff you plan on keeping.

Conclusion

In conclusion, SSDs are a great option for those looking for faster data access speeds and lower power consumption. Although they cost more than HDDs, the benefits of an SSD often outweigh the cost. If you’re considering upgrading to an SSD, make sure to do your research so that you know which type of SSD is right for your needs. Thanks for reading!